Context

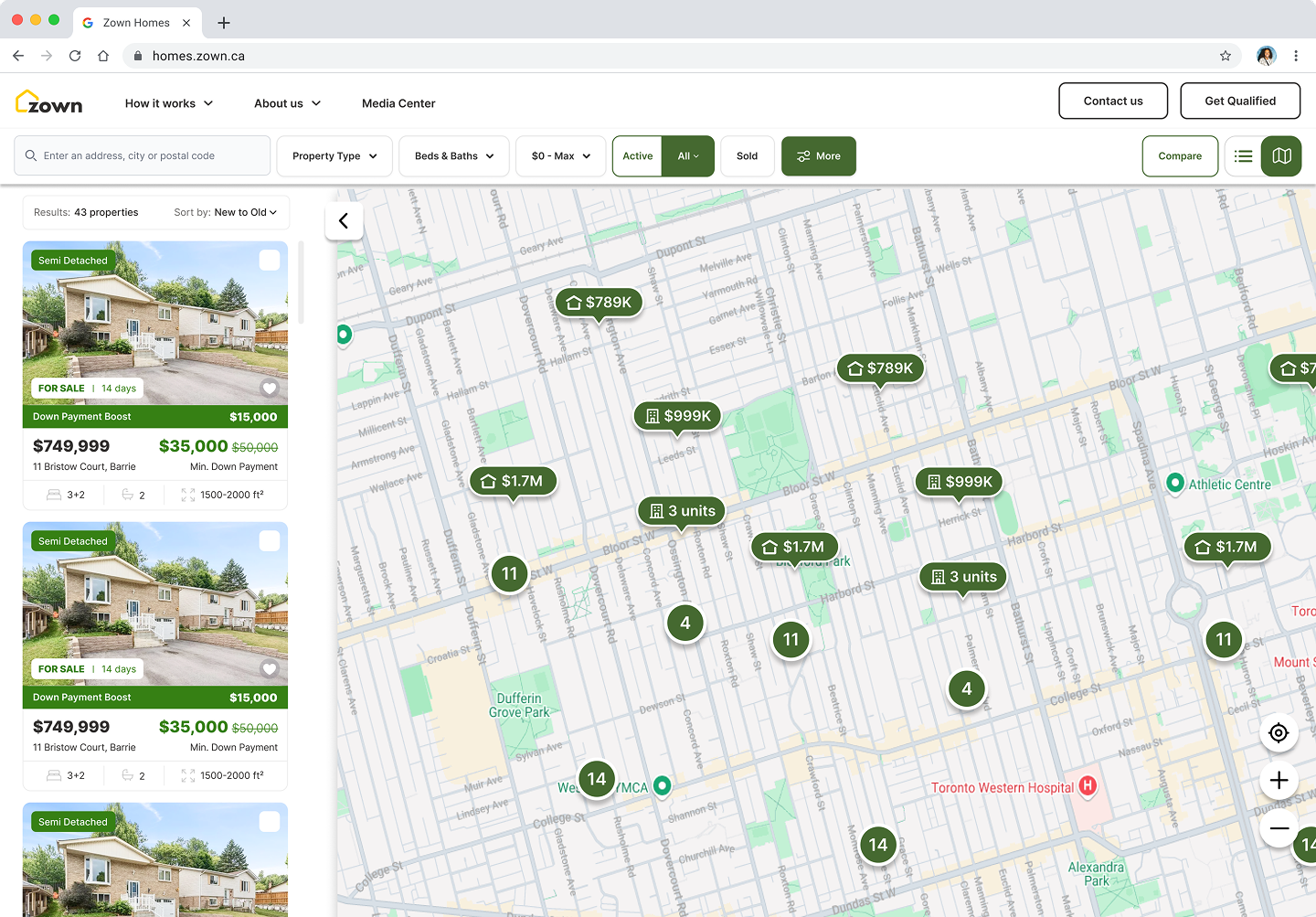

Zown's existing search was outdated, visually and functionally. Users came in from ads, couldn't find what they wanted, and left. There was nothing that made the product worth returning to. We were paying to acquire users we couldn't retain.

I had already improved conversion at the top of the funnel by redesigning the qualification form (case study). But even converted users needed somewhere to go. If the core product wasn't useful, we were just leaking them further down the funnel.

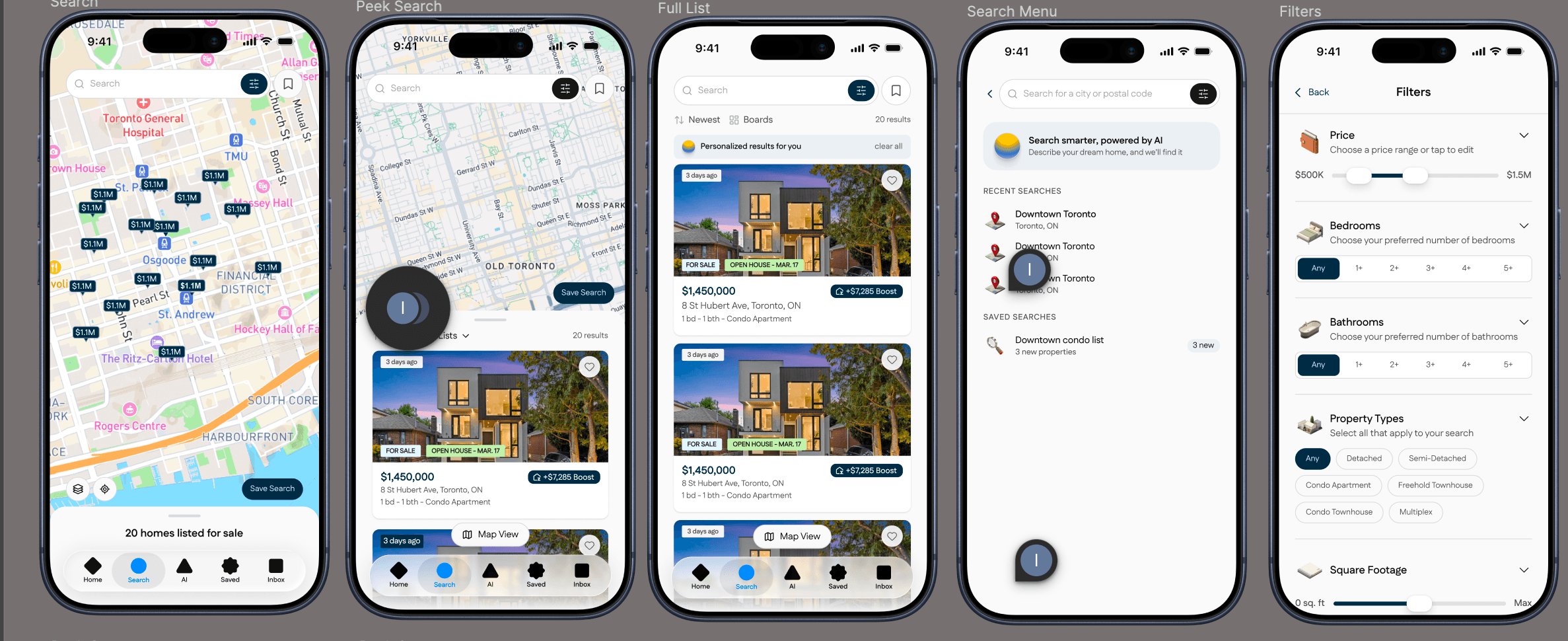

I was the only designer on the project, but in practice I was also functioning as PM — defining requirements, prioritizing scope, and making tradeoffs. An engineer scaffolded the infrastructure. Once the foundation was in place, I took over the component logic, interactions, and search system directly in code.

The Insight

Every major real estate platform follows the same interaction model: pick a city, then filter. It's how Zillow works. It's how Realtor.ca works. But it's backwards — it forces people to translate how they think into how the system thinks.

People don't speak in filters. Nobody says "location: Toronto, bedrooms: 3, maxPrice: 800000." They say things like:

Sarah · First-time buyer

Marcus · Relocating from Ottawa

Priya · Growing family

David · Investor

Inspired by patterns from 1,280+ sales transcripts

I wanted to flip that. Instead of making users learn the system's language, I designed a search system that learns theirs.

Three Layers of Parsing

The core design decision: most queries don't need AI at all. I designed a search system with three layers: instant, smart, and deep. It resolves what it can in milliseconds, without an AI call, and escalates to a deeper layer only when it has to.

This is a design decision about cost, speed, and user experience, not just an engineering choice. Because the predictable parts resolve instantly, AI spend stays low and scales sustainably. As the cache grows, more and more queries resolve without AI at all.
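The case study doesn't describe how the layers are built. As a rough illustration, here is a minimal TypeScript sketch that assumes the three layers map to regex matching (instant), a cache of earlier resolutions (smart), and an AI call (deep). `regexParse`, `SearchResolver`, and the `aiParse` callback are hypothetical names, and the AI call is stubbed as a synchronous function to keep the example short.

```typescript
// Sketch only: the layer mapping and every name here are assumptions for illustration.
type Filters = { bedrooms?: number; location?: string };
type Layer = "regex" | "cache" | "ai";
interface ParsedQuery { filters: Filters; layer: Layer } // layer = deepest layer that ran

// Layer 1 (instant): pull out predictable fragments and return the leftover text.
function regexParse(query: string): { filters: Filters; remainder: string } {
  const filters: Filters = {};
  let remainder = query;
  const beds = remainder.match(/(\d+)\s*(?:bed|bd|bedroom)s?\b/i);
  if (beds) {
    filters.bedrooms = Number(beds[1]);
    remainder = remainder.replace(beds[0], "");
  }
  return { filters, remainder: remainder.replace(/\s+/g, " ").trim() };
}

class SearchResolver {
  private readonly cache = new Map<string, Filters>();
  private readonly aiParse: (text: string) => Filters;
  aiCalls = 0; // counts how often the expensive layer actually ran

  constructor(aiParse: (text: string) => Filters) {
    this.aiParse = aiParse;
  }

  resolve(query: string): ParsedQuery {
    // Layer 1: regex. If nothing is left over, stop here.
    const { filters, remainder } = regexParse(query);
    if (remainder === "") return { filters, layer: "regex" };

    // Layer 2 (smart): reuse an earlier resolution of the same leftover phrase.
    const key = remainder.toLowerCase();
    const cached = this.cache.get(key);
    if (cached !== undefined) return { filters: { ...filters, ...cached }, layer: "cache" };

    // Layer 3 (deep): send only the leftover text to the AI, then cache the answer.
    this.aiCalls++;
    const aiFilters = this.aiParse(remainder);
    this.cache.set(key, aiFilters);
    return { filters: { ...filters, ...aiFilters }, layer: "ai" };
  }
}
```

With a stubbed AI, `resolve("2 bed")` stops at the regex layer, `resolve("2 bed near Yonge & Finch")` escalates once, and a later `resolve("3 bed near yonge & finch")` reuses the cached location without a second AI call. Escalating only the leftover text, rather than the whole query, keeps each AI request small.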

The Handshake

Complex queries take time. When someone types "2 bed walkable to restaurants with good schools nearby," the system is doing real work — parsing, escalating, resolving. That delay is noticeable.

The UX insight: latency is inevitable with AI, but uncertainty is a choice. I designed the search bar to respond the moment the user types: regex matches known patterns and lights up a tag for each one immediately.

The system performs a visual "handshake" with the user. It confirms "I understood that part," which buys the user's patience while the AI works through the harder part of the query.

The regex tags light up immediately. The AI indicator appears for the ambiguous parts. The user sees progress, not a loading spinner. By the time AI finishes, the user already trusts the system understood them.
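One way to model the handshake, sketched under assumptions: each known pattern produces a "recognized" tag the moment it matches, and every gap between matches becomes a "pending" segment that the UI could show with the AI indicator. The patterns, the `Tag` shape, and `tagQuery` are illustrative; the case study doesn't list its real pattern set.

```typescript
// Sketch only: the tag shape and patterns are hypothetical, not the production set.
type Tag =
  | { kind: "recognized"; label: string; text: string; start: number; end: number }
  | { kind: "pending"; text: string; start: number; end: number };

const PATTERNS: { label: string; re: RegExp }[] = [
  { label: "bedrooms", re: /\b\d+\s*(?:bed(?:room)?s?|bd)\b/gi },
  { label: "price", re: /\b(?:under|below|max)\s*\$?\d+(?:\.\d+)?\s*[km]?\b/gi },
  { label: "type", re: /\b(?:condo|townhouse|semi[\s-]?detached|detached)\b/gi },
];

// Adds the trimmed text between `start` and `end` as a pending (AI-bound) segment.
function pushPending(query: string, start: number, end: number, out: Tag[]): void {
  const text = query.slice(start, end).trim();
  if (text !== "") out.push({ kind: "pending", text, start, end });
}

// Cheap enough to run on every keystroke: regex hits become tags, gaps go to the AI.
function tagQuery(query: string): Tag[] {
  const hits: Tag[] = [];
  for (const { label, re } of PATTERNS) {
    re.lastIndex = 0; // global regexes keep state between calls
    let m: RegExpExecArray | null;
    while ((m = re.exec(query)) !== null) {
      hits.push({ kind: "recognized", label, text: m[0], start: m.index, end: m.index + m[0].length });
    }
  }
  hits.sort((a, b) => a.start - b.start);

  const tags: Tag[] = [];
  let cursor = 0;
  for (const hit of hits) {
    if (hit.start < cursor) continue; // drop matches that overlap an earlier tag
    pushPending(query, cursor, hit.start, tags);
    tags.push(hit);
    cursor = hit.end;
  }
  pushPending(query, cursor, query.length, tags);
  return tags;
}
```

For "2 bed walkable to restaurants under 800k", this yields `2 bed` (bedrooms) and `under 800k` (price) as instant tags, with `walkable to restaurants` left pending for the AI layer.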

The Camera Director

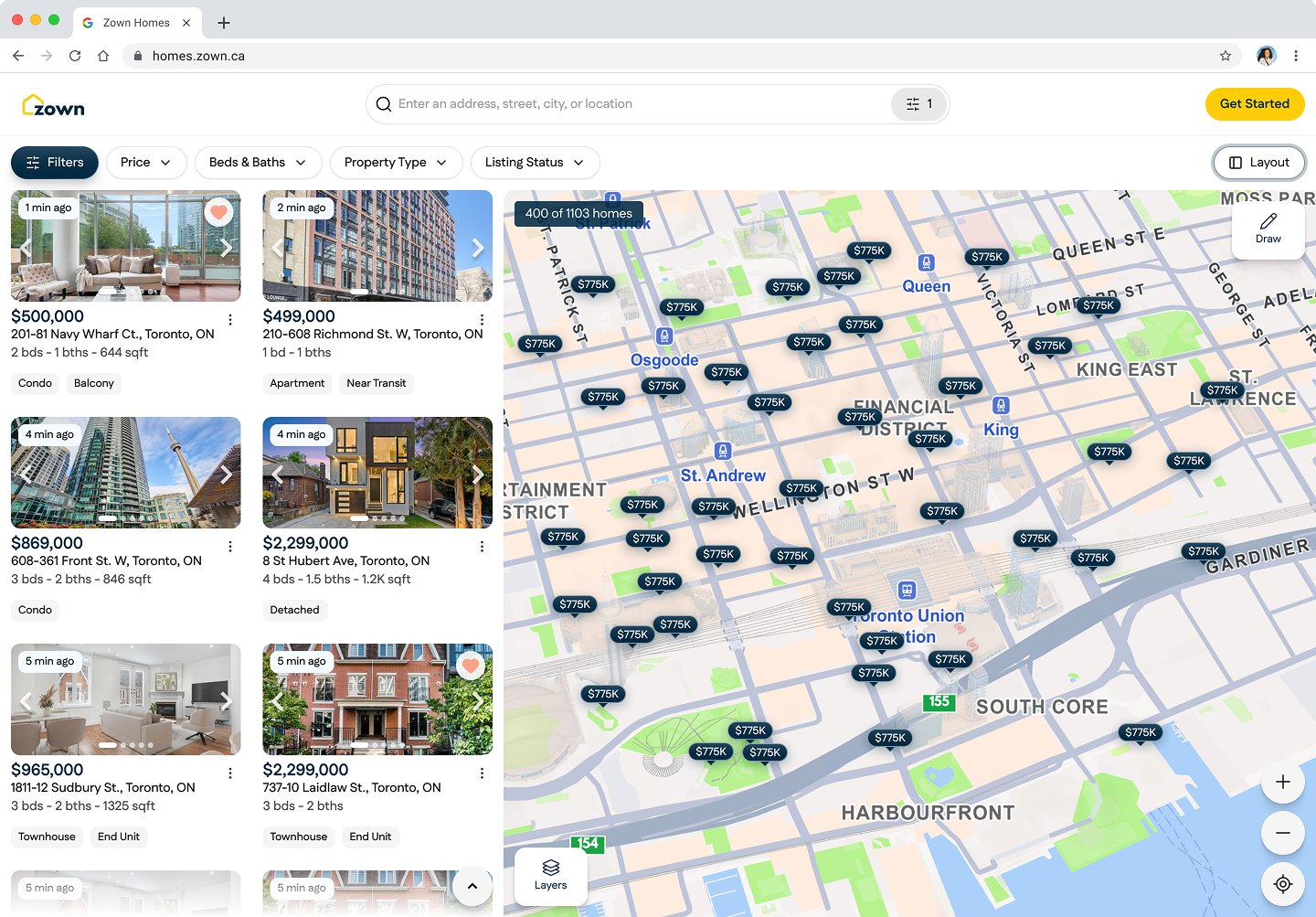

Most maps are passive — they just center on a coordinate. I built a "Camera Director" that interprets the scale of the user's query to programmatically frame the viewport. The system doesn't just look for "where," it looks for "how big."

A search for "near Yonge & Finch" zooms to a neighborhood. "Condo near the CN Tower" frames downtown. "Semi detached near Kennedy Station" shifts east. The map movement also helps mask AI processing time — by the time the camera finishes its transition, results are ready.

| Query Type | Example | Zoom Level | Camera Behavior |
|---|---|---|---|
| Neighborhood | "near Yonge & Finch" | ~14 | Tight frame, street-level |
| Landmark | "condo near the CN Tower" | ~13 | District frame, downtown |
| Transit radius | "15 min from Union Station" | ~11 | Wide frame, computed radius |
| City-wide | "homes in Toronto" | ~10 | Full city |
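The table suggests a simple rule: fixed zoom levels for named scales, and a computed zoom when the query implies a radius. Below is a minimal sketch of that rule. It assumes a classifier has already labeled the query's scale and, for transit queries, converted travel time into a radius in meters; that conversion isn't described in the case study. The zoom calculation uses the standard Web Mercator ground resolution for 256-pixel tiles, about 156543.03392 m/px at zoom 0 on the equator, scaled by cos(latitude). For 512-pixel tiles, as in Mapbox GL, subtract 1 from the result. `QueryScale`, `zoomForRadius`, and `cameraZoom` are hypothetical names.

```typescript
// Sketch only: scale labels and names are hypothetical; fixed zooms mirror the table.
type QueryScale = "neighborhood" | "landmark" | "transitRadius" | "city";

// Approximate fixed zoom per scale (transit radius is computed instead).
const ZOOM_BY_SCALE: Record<Exclude<QueryScale, "transitRadius">, number> = {
  neighborhood: 14,
  landmark: 13,
  city: 10,
};

// Web Mercator ground resolution at zoom 0 for 256 px tiles, in meters per pixel at the equator.
const METERS_PER_PIXEL_Z0 = 156543.03392;

// Zoom at which a circle of `radiusMeters` around latitude `lat` spans `viewportPx` pixels.
function zoomForRadius(radiusMeters: number, lat: number, viewportPx: number): number {
  const neededMetersPerPixel = (2 * radiusMeters) / viewportPx;
  const groundResolutionZ0 = METERS_PER_PIXEL_Z0 * Math.cos((lat * Math.PI) / 180);
  const zoom = Math.log2(groundResolutionZ0 / neededMetersPerPixel);
  return Math.min(20, Math.max(0, zoom)); // clamp to a typical web-map zoom range
}

interface RadiusFrame { radiusMeters: number; lat: number; viewportPx: number }

function cameraZoom(scale: QueryScale, frame?: RadiusFrame): number {
  if (scale === "transitRadius") {
    if (frame === undefined) throw new Error("transit queries need a radius frame");
    return zoomForRadius(frame.radiusMeters, frame.lat, frame.viewportPx);
  }
  return ZOOM_BY_SCALE[scale];
}
```

Computing the transit zoom rather than hard-coding it means a wider radius automatically produces a wider frame: halving the radius raises the zoom by exactly one level, since each level doubles the map's pixel resolution.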

What This Enabled

The system gets smarter with every search. Every resolved location is cached. Every new phrasing strengthens the regex layer. Over time, the majority of queries resolve without AI at all, so the marginal AI cost per search trends toward zero.

Search stopped being a utility and became the product.